Robotic Imaging: Teaching Machines to See the Unseen

Jack Naylor from the Australian Centre for Robotics (University of Sydney) discusses novel sensors, radiance-based representations, and how computational imaging helps robots make decisions in complex environments. He explains how unconventional cameras and AI-driven representations allow robots to “see” and navigate challenging environments, such as underwater or in the vacuum of space.

[00:00:27] Robotic imaging is about building new ways for robots to represent their surroundings. Robots are deployed everywhere—from rainy fields and underwater depths to your own living room. To make autonomous decisions without human intervention, they need cameras that see the world differently than a standard lens does.

Radiance-Based Representations

[00:01:31] Jack’s research focuses on radiance-based methods, which model how light behaves in an environment. Instead of working from a flat 2D photo, his team uses neural networks (AI) to step along rays of light and estimate the density and color at each point along the way. This allows robots to understand complex visual phenomena, like reflections on glass or metal, that often confuse traditional sensors.
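To make the idea of stepping along a ray concrete, here is a minimal sketch of how density and color samples can be blended into a single pixel. It is an illustration only, not Jack's implementation: the function name `render_ray` is hypothetical, and the densities and colors are supplied by hand, whereas in a radiance-based method a neural network would predict them at each sample.

```python
import math

def render_ray(densities, colors, step):
    """Blend samples along one ray, from the camera outward.

    densities: non-negative density at each sample (hand-supplied here;
               a neural network would normally predict these)
    colors:    (r, g, b) tuple at each sample
    step:      distance between consecutive samples
    """
    transmittance = 1.0          # fraction of light still unblocked
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb in zip(densities, colors):
        alpha = 1.0 - math.exp(-sigma * step)   # opacity of this segment
        weight = transmittance * alpha          # contribution to the pixel
        for i in range(3):
            pixel[i] += weight * rgb[i]
        transmittance *= 1.0 - alpha            # light left for later samples
    return tuple(pixel), transmittance

# Two empty samples, then a solid red surface.
pixel, remaining = render_ray(
    densities=[0.0, 0.0, 1e6],
    colors=[(0, 0, 1), (0, 0, 1), (1, 0, 0)],
    step=0.1,
)
```

Empty space contributes nothing because its density is zero, so the pixel takes on the color of the first dense surface the ray reaches.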

[00:03:37] Traditional robots can struggle with “shiny” objects. Jack shares an example of a robot crashing into a glass building because it couldn’t distinguish a reflection from an actual opening. By representing these environments at a fundamental level of light sensing, robots can avoid these common pitfalls.

Space Challenges: Docking in the Dark

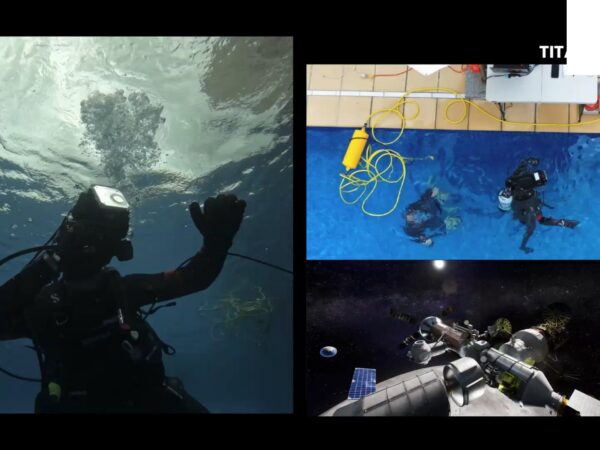

[00:04:01] In space, lighting conditions are extreme. A robot trying to dock with a spacecraft might experience blinding sunlight one second and complete shadow the next. Standard cameras often fail to see critical navigation aids around docking ports in these conditions.

[00:05:31] To solve this, Jack uses event-based sensing. Inspired by biological retinas, these sensors don’t take a standard image; they only measure changes in light. This allows the robot to see the outline of a satellite even in near-total darkness or overwhelming glare, enabling safe, autonomous docking maneuvers.
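The idea of measuring only changes in light can be sketched in a few lines. This is a simplified simulation under stated assumptions, not how any particular sensor works internally: real event cameras report changes per pixel and asynchronously rather than by comparing frames, and the function name `events_between`, the threshold, and the sample values are all hypothetical.

```python
import math

def events_between(prev_frame, next_frame, threshold=0.2, eps=1e-6):
    """Return (row, col, polarity) for pixels whose log-brightness changed.

    Comparing log-brightness means a change is judged relative to how
    bright the pixel already was, so the same rule applies in shadow
    and in glare.
    """
    events = []
    for r, (prev_row, next_row) in enumerate(zip(prev_frame, next_frame)):
        for c, (p, n) in enumerate(zip(prev_row, next_row)):
            delta = math.log(n + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events

# Top row is dim, bottom row is 100 times brighter.
before = [[1.0, 1.0], [100.0, 100.0]]
after = [[1.0, 2.0], [100.0, 50.0]]
events = events_between(before, after)
```

Pixels that do not change produce no output at all, which is why a moving outline stands out even when the rest of the scene is dark or saturated.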

Biomimicry and New Eyes for AI

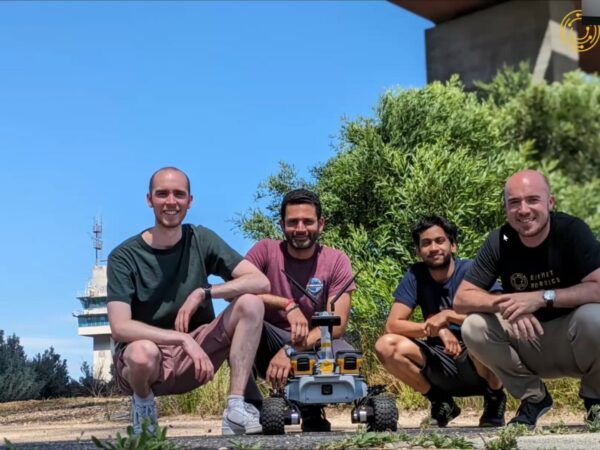

[00:14:21] Jack’s lab designs cameras for specific tasks, essentially doing what evolution has done for millions of years. For example, **mantis shrimp** use hyperspectral vision to identify prey. By using hyperspectral cameras on rovers, scientists can not only capture images but also identify materials and measure wavelengths of light invisible to the human eye.
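One common way to identify a material from a hyperspectral pixel is to compare its spectrum with known reference spectra by the angle between them. The sketch below illustrates that general technique and is not described in the interview; the function names, the four-band spectra, and the material labels are made-up values for illustration.

```python
import math

def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))

def classify(pixel, library):
    """Return the name of the reference spectrum closest in angle."""
    return min(library, key=lambda name: spectral_angle(pixel, library[name]))

# Hypothetical reflectance in four bands; the last band is near-infrared.
library = {
    "vegetation": [0.05, 0.08, 0.04, 0.50],
    "rock": [0.20, 0.25, 0.30, 0.32],
}
bright_leaf = [0.10, 0.16, 0.08, 1.00]   # same shape as vegetation, twice as bright
label = classify(bright_leaf, library)
```

Because the angle ignores overall brightness, the same material is matched whether it sits in sunlight or in shade.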

[00:15:12] Jack’s lab also works with light field cameras, which allow images to be refocused after they are taken. This is especially useful for looking through obstacles like leaves or floating debris in the ocean.
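Refocusing after capture can be sketched with the classic shift-and-add approach: a light field holds many slightly offset views of the scene, and shifting each view in proportion to its offset before averaging brings one depth into focus. This is a minimal sketch with hypothetical names (`refocus`, `slope`) and a toy one-row scene, not the lab's actual pipeline.

```python
def refocus(views, slope):
    """Shift-and-add refocusing.

    views: dict mapping (u, v) viewpoint offsets to 2D lists of equal size
    slope: integer pixel shift per unit of viewpoint offset; choosing it
           selects which depth ends up in focus
    """
    first = next(iter(views.values()))
    height, width = len(first), len(first[0])
    total = [[0.0] * width for _ in range(height)]
    count = [[0] * width for _ in range(height)]
    for (u, v), image in views.items():
        dy, dx = slope * v, slope * u
        for y in range(height):
            for x in range(width):
                sy, sx = y + dy, x + dx
                if 0 <= sy < height and 0 <= sx < width:
                    total[y][x] += image[sy][sx]
                    count[y][x] += 1
    return [[total[y][x] / count[y][x] if count[y][x] else 0.0
             for x in range(width)] for y in range(height)]

# A single bright point that appears one pixel further right in each view.
views = {
    (-1, 0): [[0, 1, 0, 0, 0]],
    (0, 0): [[0, 0, 1, 0, 0]],
    (1, 0): [[0, 0, 0, 1, 0]],
}
sharp = refocus(views, slope=1)     # shifts line up: the point is in focus
blurred = refocus(views, slope=0)   # no shift: the point is smeared out
```

Objects at other depths do not line up after the shift, so they blur away, which is what lets a refocused image look past a thin obstacle in the foreground.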

The Fulbright Journey

[00:16:31] Jack was recently selected as a Fulbright Future Scholar to collaborate with Carnegie Mellon University’s Robotics Institute. This prestigious exchange program, established after WWII, focuses on global collaboration. Jack aims to use this opportunity to share Australia’s robotics research and build international connections to solve the hardest problems in space exploration.

Advice for Students: Passion and Hard Problems

[00:10:55] Jack’s own path began in mechanical engineering and physics. His biggest tip for students is to “give things a go”—even if they aren’t directly related to your current field. “Find the hard problems that your passion can solve,” Jack says, “and set yourself to solving them.”
